By: Jaspreet Bindra, Founder & MD, The Tech Whisperer Ltd, UK

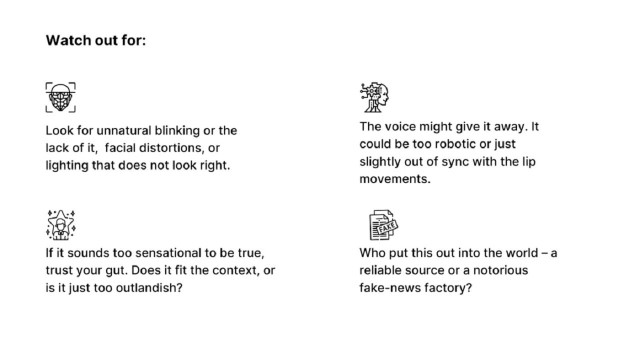

INDIA: The amount of deepfake content online is growing at an alarming rate, with 96% of deepfakes being pornographic. Jaspreet Bindra, Founder & MD of The Tech Whisperer Ltd, UK, warns of the potential dangers of deepfakes, which use Generative Adversarial Networks (GANs) to create lifelike videos that are difficult to distinguish from reality.

With several elections around the corner in India, politicians and political parties could be both creators and victims of deepfakes. These videos could be used to spread misinformation, put political opponents on the spot, or even build an entire campaign to sway voters. Ordinary citizens could fall victim as well, and the scope for misuse is practically unlimited.

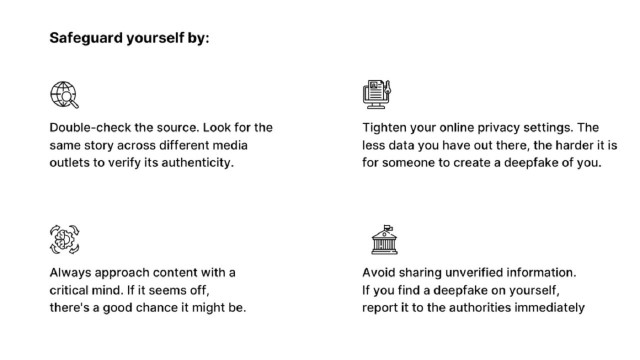

To avoid falling victim to deepfakes, Bindra advises double-checking the source, not sharing unverified information, approaching content with a critical mind, tightening online privacy settings, and reporting any deepfakes to the authorities immediately.

Bindra also calls for stringent regulation with exemplary punishment for offenders, including a mandate that anyone who uses an AI model to produce an image or information must disclose it. He further suggests raising public awareness of classifiers, software that can detect AI-generated content, and encouraging their widespread use, much like antivirus software.

An expert in AI and GenAI, Bindra advises several global organizations on technology strategy and sits on the Advisory Board of Findability Sciences, a Boston-based enterprise AI firm. He is also the author of two books on tech and was recognized as the inaugural ‘Digitalist of the Year’ by Mint and SAP.

In conclusion, the rise of deepfake content online is a cause for concern, and it is crucial to be aware of the dangers and take steps to avoid falling victim to this technology.